Elephants Still Don’t Play Chess

Why information-gathering actions are key to a robot's success.

In 1990, Rod Brooks published “Elephants Don’t Play Chess” in Robotics and Autonomous Systems. He was reacting against 30 years of research in symbolic methods, which had failed to achieve human-level intelligence. One of the crowning achievements of this work was the ability to play chess using classic AI techniques such as minimax search and alpha-beta pruning, a line of research that later culminated in successes such as IBM’s Deep Blue.

But Good Old Fashioned AI (GOFAI) assumed that the underlying board state was provided to the system as a symbolic input. Its researchers consciously scoped out the problem of mapping from higher-dimensional perceptual input to the lower-dimensional game state, a wise choice given the computational resources available at that time. In chess, a wooden Staunton set, a plastic tournament set, a screenshot from chess.com, and a textual list of moves can all represent the same underlying game state. Yet these representations look radically different.

ChatGPT has largely solved this problem. It can quickly and accurately translate an image of a chessboard into a symbolic representation and then reason about game state, board positions, and next moves for all of these very different images.

But what happens when the representation is unfamiliar?

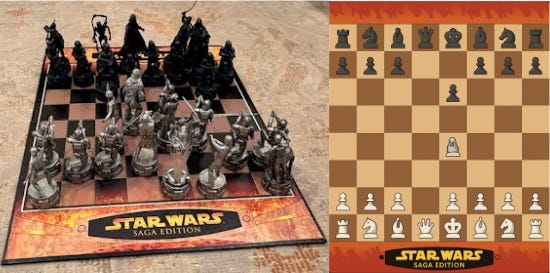

I got my nephew a vintage Star Wars chess set for his birthday. (You can find good things at SwapFest.) Yoda is the white king, and Emperor Palpatine is the black king. The pieces are detailed figurines of characters. We set up a game, and almost immediately ran into a problem: we couldn’t tell which pieces were which. Is Chewbacca a bishop or a knight? The Stormtrooper could be a pawn or a rook.

ChatGPT has the same problem. When I showed it a picture of the Star Wars board, it couldn’t reliably render the game state. It asked for clarification about which pieces mapped to which symbols. The mapping that works so well for standard chess sets breaks down when the visual vocabulary is unfamiliar.

(left) My nephew and I mid-game on the Star Wars Saga Edition chess set

(right) ChatGPT 5.2’s attempt at rendering the game state (generated 12/15/2025)

Here’s what my nephew and I did when we got confused: we picked up the piece and looked at the base. Each figurine has a small chess symbol printed on the base. Chewbacca is a knight. The Stormtrooper is a pawn. Problem solved.

This is an information-gathering action. It requires moving an end effector to a specific location in the world, manipulating an object, and focusing on new information that wasn’t previously visible. It’s a simple behavior, something a child does without thinking. But it’s precisely the kind of output that ChatGPT cannot generate. ChatGPT can ask a person to flip the piece over. It can request clarification. But it cannot, itself, produce the high-dimensional motor output necessary to collect this information on its own. It can reason about chess at a high level, but it cannot take the physical action that would resolve its uncertainty, at least not yet.

This connects to the embodiment gap that David and I described in our Grounded Turing Test work. There is a facet of intelligence that involves processing high-dimensional sensor input and producing high-dimensional actuator output to perform goal-directed behavior in the physical world. LLMs are far on one side of this spectrum; crows, dogs, and three-year-olds are on the other.

To create robots that can perform these behaviors, we need methods such as reinforcement learning that enable the robot to discover actions outside the demonstration distribution. We need representations like Partially Observable Markov Decision Processes that explicitly model what the agent knows and what it doesn’t. These frameworks describe a robot capable of reasoning about its own uncertainty and taking actions specifically to reduce it.
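To make the idea concrete, here is a minimal sketch of the belief-tracking half of a POMDP, applied to the Chewbacca question. It is not a full POMDP solver: the hidden state (the piece's identity) never changes, there are no rewards, and the action is chosen greedily by one-step expected entropy. The action names `look_from_afar` and `inspect_base` and all of the probabilities are assumptions made up for illustration.

```python
import math

# Hypothetical hidden state: is the Chewbacca figurine a knight or a bishop?
PIECES = ("knight", "bishop")

# Assumed observation models P(observation | true piece, action).
# The numbers are illustrative, not measured.
OBS_MODEL = {
    # Glancing at the figurine is barely better than a coin flip.
    "look_from_afar": {
        "knight": {"knight": 0.55, "bishop": 0.45},
        "bishop": {"knight": 0.45, "bishop": 0.55},
    },
    # Picking the piece up and reading the symbol on its base is nearly certain.
    "inspect_base": {
        "knight": {"knight": 0.99, "bishop": 0.01},
        "bishop": {"knight": 0.01, "bishop": 0.99},
    },
}


def update_belief(belief, action, observation):
    """Bayes' rule: posterior is proportional to likelihood times prior."""
    unnormalized = {s: OBS_MODEL[action][s][observation] * belief[s] for s in PIECES}
    total = sum(unnormalized.values())
    return {s: p / total for s, p in unnormalized.items()}


def entropy(belief):
    """Shannon entropy of the belief, in bits."""
    return -sum(p * math.log2(p) for p in belief.values() if p > 0)


def expected_entropy_after(belief, action):
    """Average remaining uncertainty over the observations the action might yield."""
    expected = 0.0
    for obs in PIECES:
        p_obs = sum(OBS_MODEL[action][s][obs] * belief[s] for s in PIECES)
        if p_obs > 0:
            expected += p_obs * entropy(update_belief(belief, action, obs))
    return expected


def best_information_action(belief):
    """Greedy choice: the action expected to leave the least uncertainty."""
    return min(OBS_MODEL, key=lambda a: expected_entropy_after(belief, a))


prior = {"knight": 0.5, "bishop": 0.5}
best = best_information_action(prior)
posterior = update_belief(prior, best, "knight")
print(best, posterior)
```

Under these assumed numbers, the agent prefers to inspect the base, because that observation is expected to remove far more uncertainty than another look from across the table. The hard part for a robot is not this arithmetic but producing the motor behavior that `inspect_base` stands in for.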

Brooks’ critique from 1990 still points to something real. The boundary has moved: mapping familiar images to symbols is largely handled, but embodied intelligence still requires the ability to act in the world to gather information, and that remains an open problem.

Thanks to Jessica Hodgkins, Staci Intriligator, and my nephew for their help with this post. All errors and opinions are our own.